
I am a CS PhD student at Stanford University, advised by Prof. Ehsan Adeli in the STAI Lab. My research focuses on video generation and understanding, world modeling, and Embodied AI. I am also interested in their real-world applications, such as healthcare.

Before Stanford, I received my master's degree from the CMU Robotics Institute, where I worked on 3D vision with Prof. Laszlo Jeni. I also collaborated closely with Prof. Berkin Bilgic at Harvard Medical School on MRI reconstruction, and with Prof. Cheng Jin at Shanghai Jiao Tong University on medical vision. I obtained my bachelor's degree from Tsinghua University, majoring in Automation with a second major in Economics and Management.

My long-term goal is to build AI systems that are both technically strong and practically useful, especially in embodied perception, visual generation, and healthcare settings. I enjoy working with motivated people across academia and industry. If you'd like to collaborate, feel free to reach out.

yuheng[at]stanford.edu

CV / Google Scholar / GitHub / LinkedIn


I am primarily interested in video generation and understanding, world modeling, and Embodied AI. More broadly, I want to build visual intelligence systems that can model dynamic environments, understand how the world evolves over time, and support decision-making and interaction in open-world settings.


Reviewer for CVPR, ICCV, ECCV, NeurIPS, SIGGRAPH, MICCAI, ISBI, Computer Graphics Forum, and ISMRM.


\* indicates co-first author. Please see my Google Scholar for the full publication list.


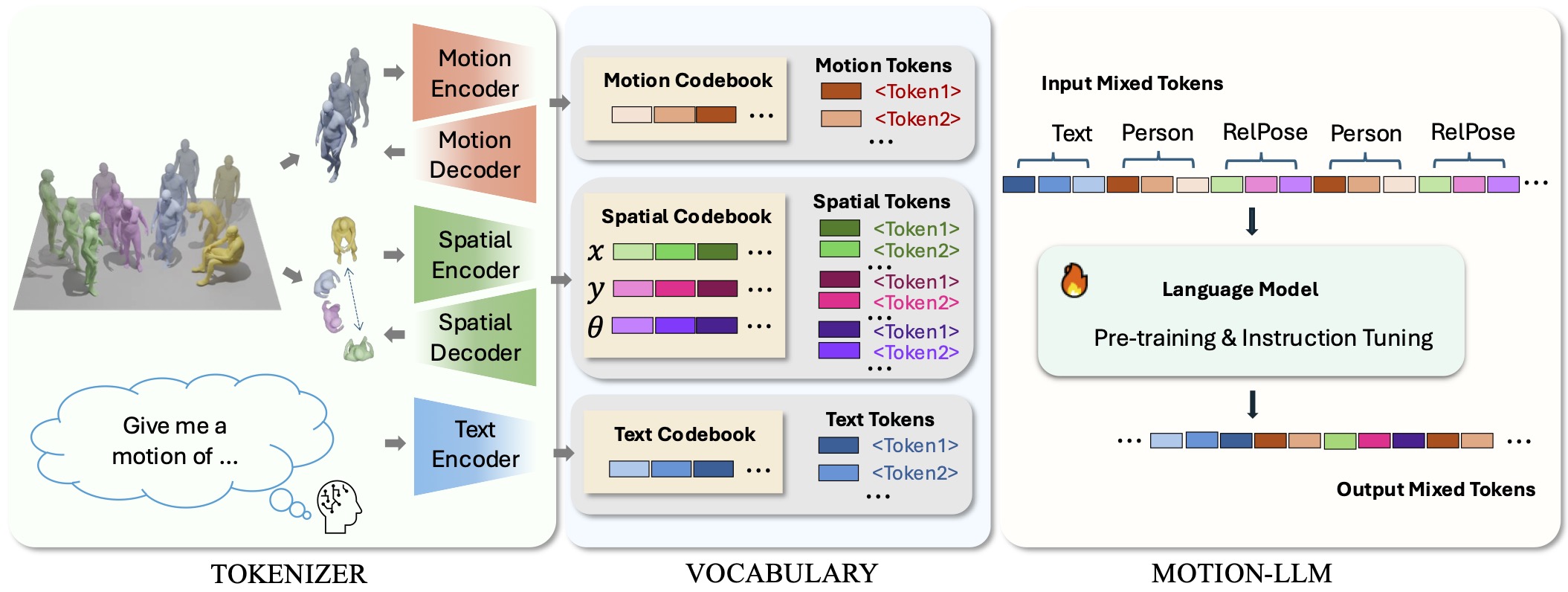

Heng Yu\*, Juze Zhang\*, Changan Chen, Tiange Xiang, Yusu Fang, Juan Carlos Niebles, Ehsan Adeli

3DV 2026

paper / project page

SocialGen is the first unified motion-language model for multi-human interactions, enabling state-of-the-art social motion modeling with a new representation, benchmark, and dataset.


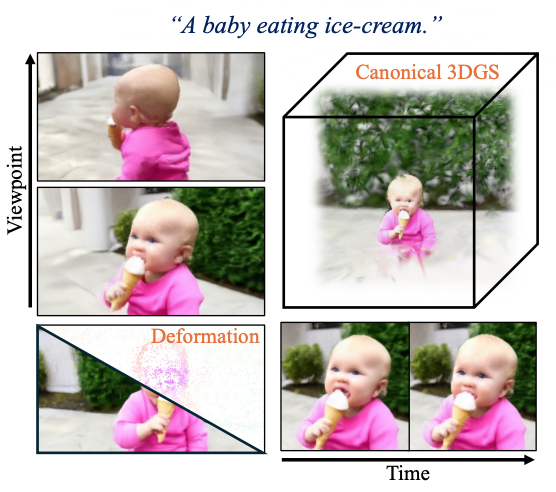

Heng Yu\*, Chaoyang Wang\*, Peiye Zhuang, Willi Menapace, Aliaksandr Siarohin, Junli Cao, László A. Jeni, Sergey Tulyakov, Hsin-Ying Lee

NeurIPS 2024

paper / project page

We propose 4Real, the first photorealistic text-to-4D scene generation pipeline.


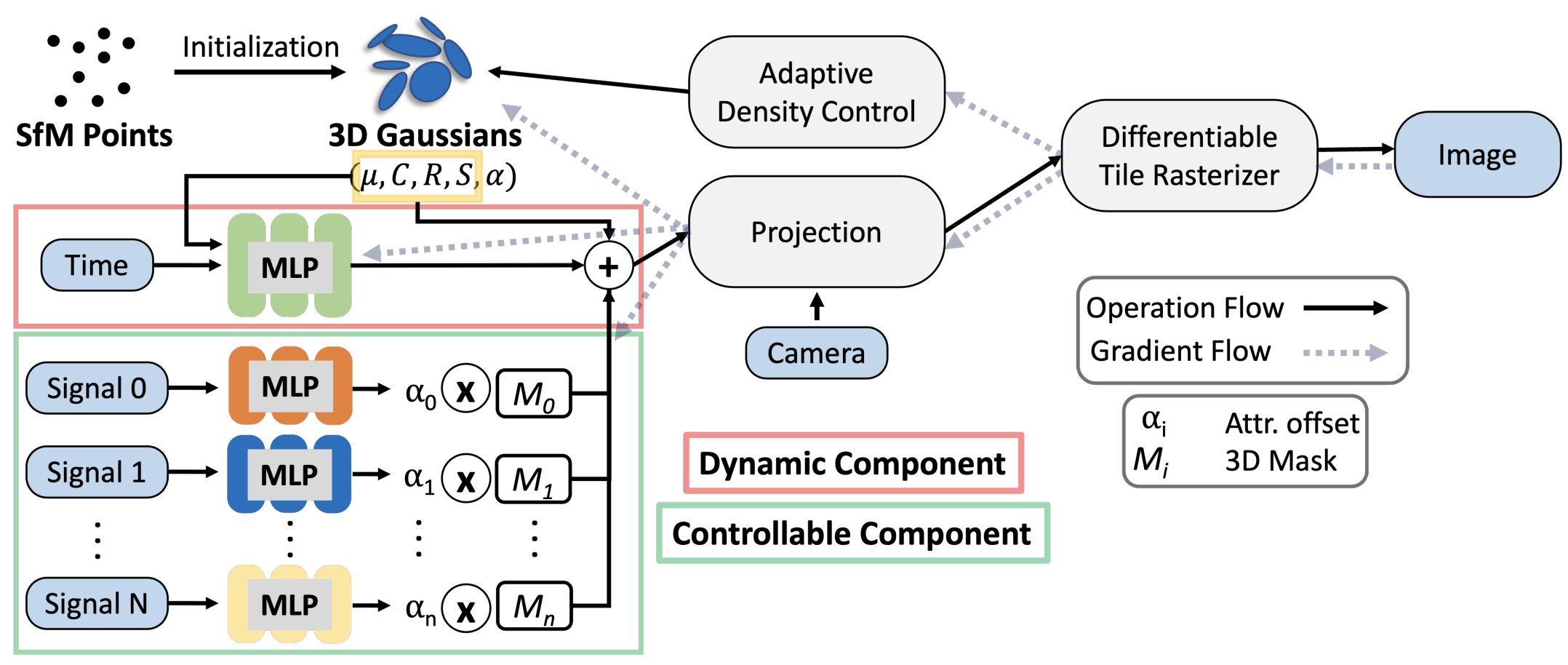

Heng Yu, Joel Julin, Zoltan Adam Milacski, Koichiro Niinuma, László A. Jeni

CVPR 2024

paper / project page / code

CoGS enables controllable Gaussian Splatting for dynamic scenes with direct scene manipulation and real-time control.


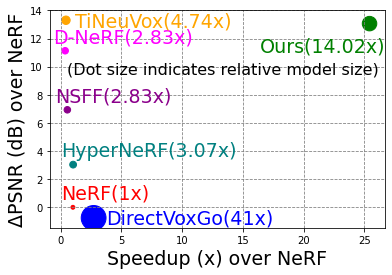

Heng Yu, Joel Julin, Zoltan Adam Milacski, Koichiro Niinuma, László A. Jeni

CVPR 2023

paper / project page / code / CMU RI News

DyLiN extends light field networks to dynamic, non-rigid scenes with strong visual fidelity and efficiency.


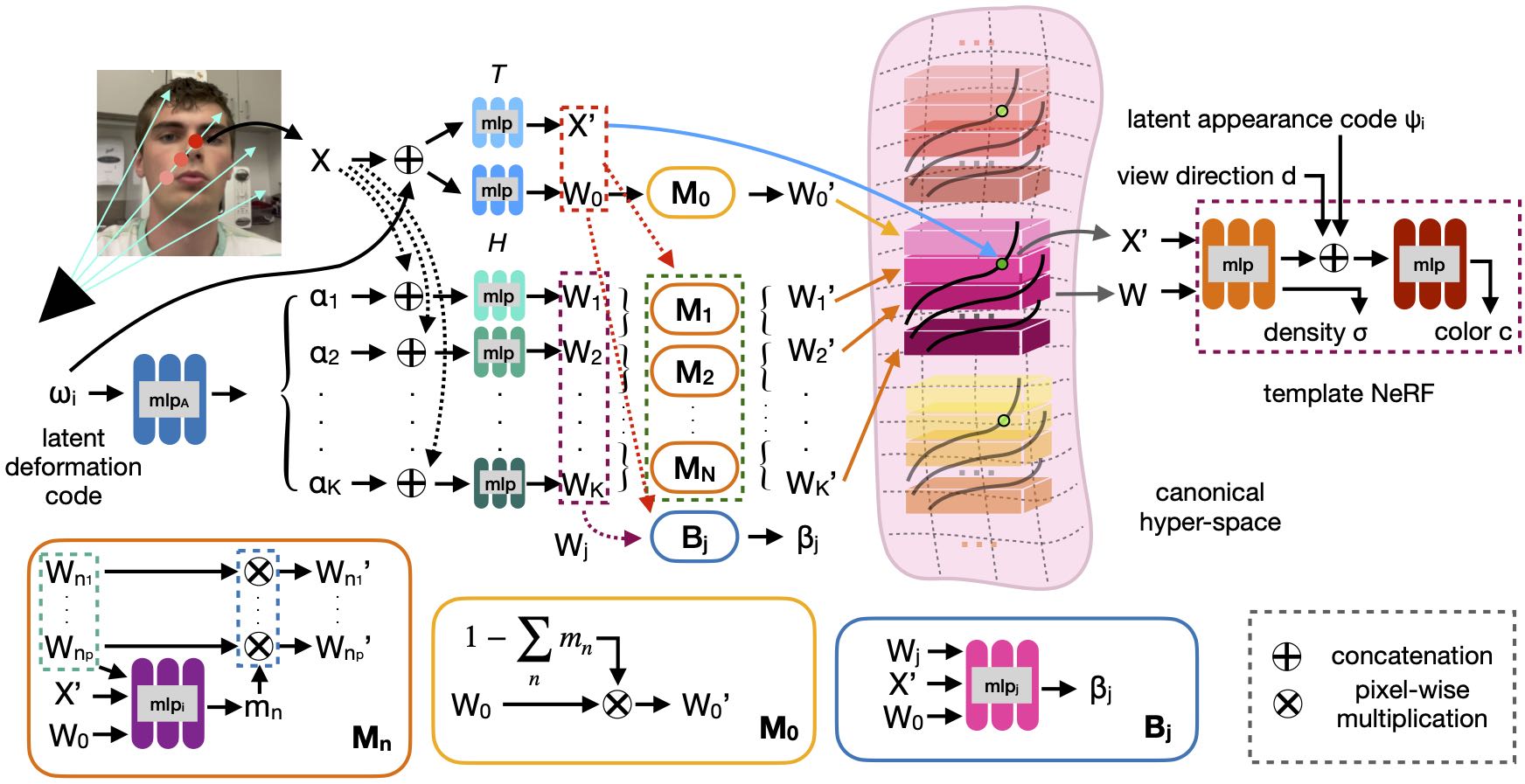

Heng Yu, Koichiro Niinuma, László A. Jeni

FG 2023 - Best Paper Award Finalist

paper / project page / code

CoNFies is a fully automatic controllable neural representation for face self-portraits.


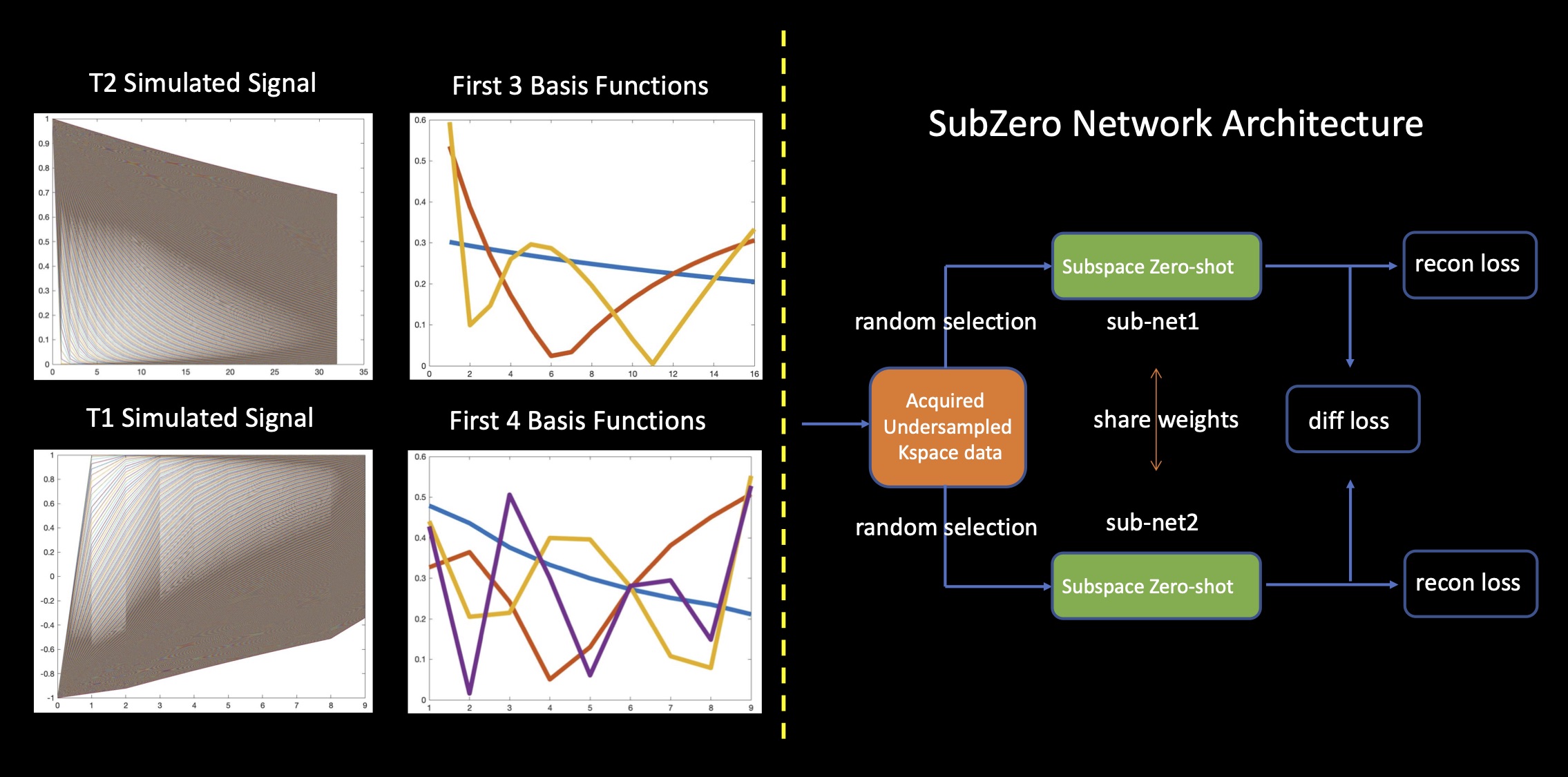

Heng Yu, Yamin Arefeen, Berkin Bilgic

ISMRM 2023 - Power Pitch

paper / code

SubZero improves subspace-based zero-shot self-supervised MRI reconstruction with a parallel architecture and attention mechanism.


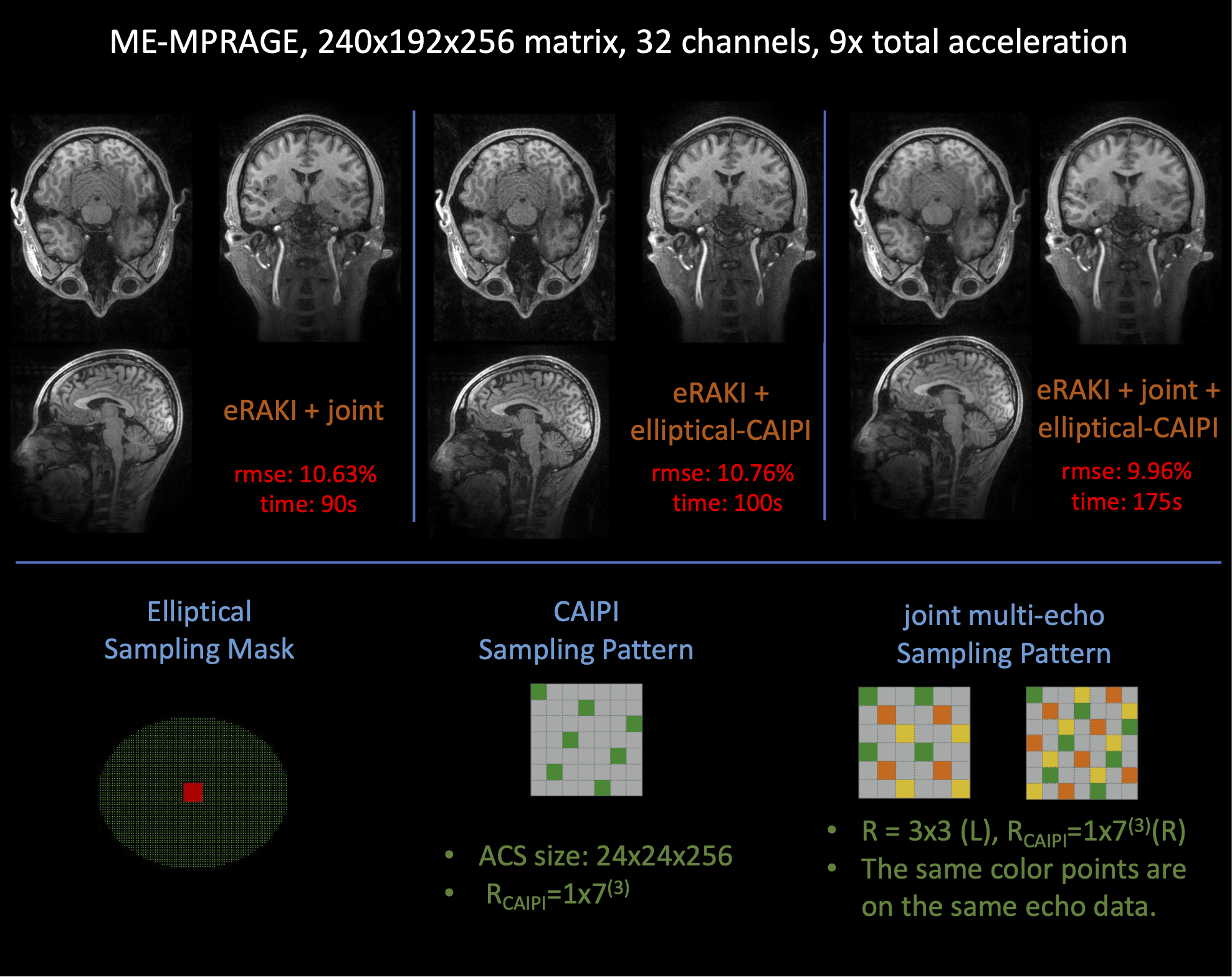

Heng Yu, Zijing Dong, Yamin Arefeen, Congyu Liao, Kawin Setsompop, Berkin Bilgic

ISMRM 2021 - Oral Presentation

paper / code

eRAKI accelerates RAKI by directly learning a coil-combined target for robust and efficient MRI reconstruction.


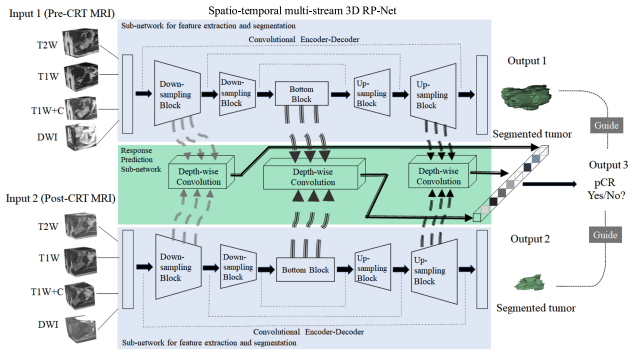

Cheng Jin\*, Heng Yu\*, Jia Ke\*, Peirong Ding\*, Yongju Yi, Xiaofeng Jiang, Xin Duan, Jinghua Tang, Daniel T. Chang, Xiaojian Wu, Feng Gao, Ruijiang Li

Nature Communications 2021

paper / code

A multi-task deep learning framework for tumor segmentation and treatment response prediction from longitudinal medical images.


Website template adapted from Jon Barron.